
Every now and then, a 20-year-old builds something so good that billionaires come knocking at the door with checkbooks in hand.

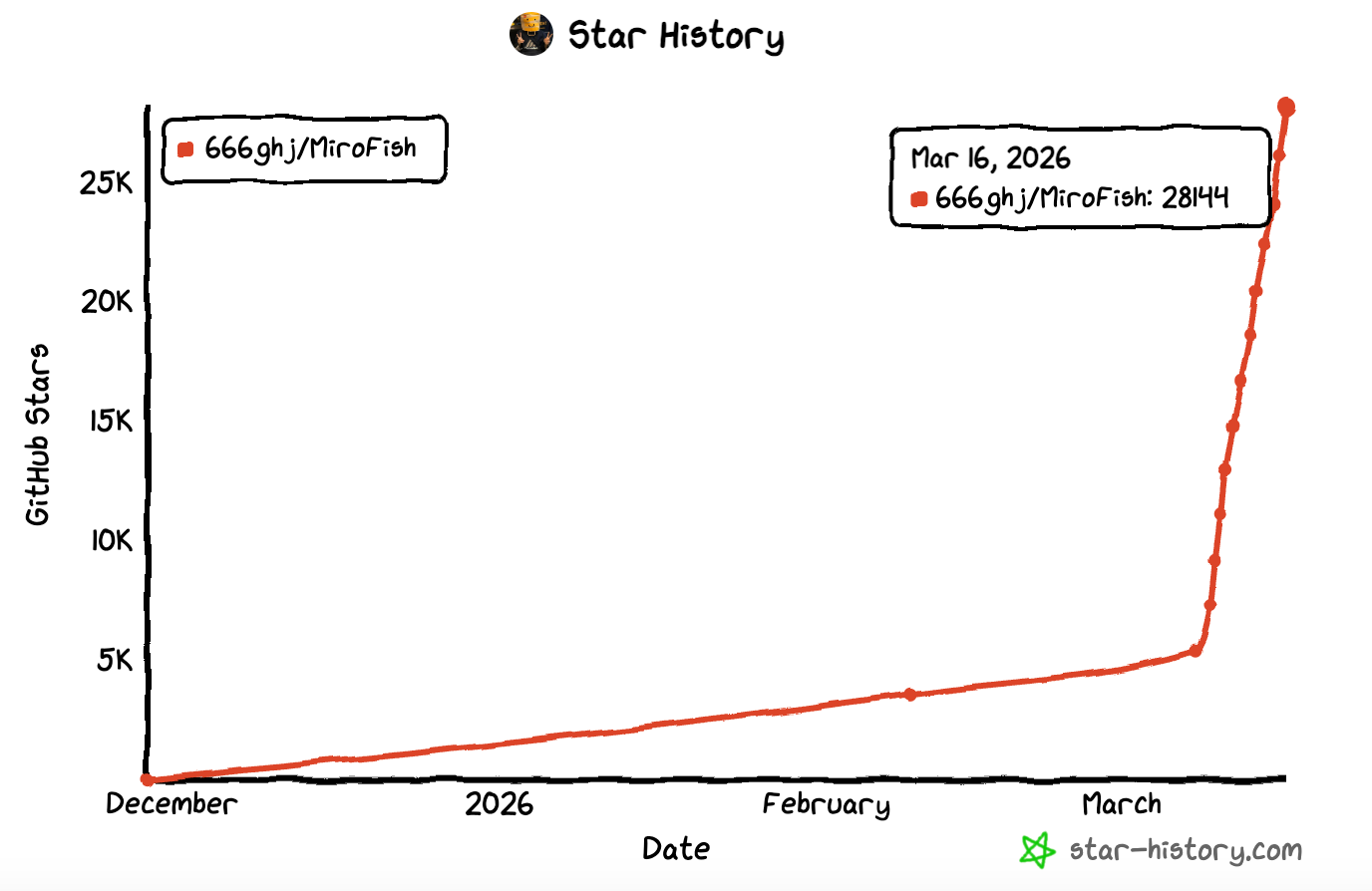

MiroFish, billed as "a simple and universal swarm intelligence engine, predicting anything," managed to do exactly that when it topped GitHub's global trending list last week.

It’s racked up over 28k stars and 3.4k forks in almost no time.

What makes the story even more intriguing is who built it: Guo Hangjiang, aka BaiFu, a senior undergraduate at Beijing University of Posts and Telecommunications.

The origin story

MiroFish did not emerge from a vacuum. Its direct predecessor, BettaFish, was a multi-agent public opinion analysis tool that Guo had developed a few months back for his graduation project.

Once the base was set, he kept vibe coding and tweaking logic with the help of AI tools. The whole process, from ideation to the actual rollout, only took ten days.

BettaFish climbed to the top of GitHub’s trending charts on the heels of its launch.

Guo said, "I was really excited at first."

But that feeling didn’t last long. He was slowly becoming "desensitized."

Guo added, "After reaching 10k, I kind of lost the feeling."

While BettaFish looked backward, digging into the past, MiroFish looks forward, attempting to simulate what happens next.

The endpoint of one fish became the starting point of the other.

All this caught the eye of Chen Tianqiao, founder of Shanda Group, one of China's early internet giants.

Interestingly, during its peak in 2004, Shanda was the country's largest internet company by market capitalization.

Chen was impressed by Guo's ability to identify and find solutions to actual problems with the help of AI.

Right after Guo submitted a rough demo video of MiroFish, Chen committed 30 million yuan (~$4 million) to support the project's development.

Chen didn’t flinch because MiroFish represents the direction he has always valued: making AI move from simply "answering questions" to truly "solving problems."

How to get started

All you’ll need is Node.js 18+, Python 3.11+, uv (a Python package manager), an LLM API key, and a Zep Cloud API key.

Once that’s sorted, just clone the repository, add your API keys to the .env file, install the dependencies, and run it.
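Before cloning, it can help to confirm the prerequisites are actually in place. The script below is a hypothetical helper, not part of MiroFish: the function names are made up, and the .env key names `LLM_API_KEY` and `ZEP_API_KEY` are assumptions, so check the repository's example env file for the real ones.

```python
import re
import shutil
import subprocess
import sys
from pathlib import Path

# Hypothetical key names: check the repository's example .env file for the real ones.
REQUIRED_KEYS = ("LLM_API_KEY", "ZEP_API_KEY")


def parse_version(text: str) -> tuple[int, ...]:
    """Pull the first dotted number out of strings like 'v18.19.0'."""
    match = re.search(r"\d+(?:\.\d+)*", text)
    if match is None:
        raise ValueError(f"no version number in {text!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def parse_env(text: str) -> dict[str, str]:
    """Minimal KEY=VALUE reader: skips blanks and comments, strips surrounding quotes."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip("\"'")
    return values


def missing_keys(env: dict[str, str], required=REQUIRED_KEYS) -> list[str]:
    """Keys that are absent or left empty."""
    return [key for key in required if not env.get(key)]


def check_environment(env_path: Path = Path(".env")) -> list[str]:
    """Return human-readable problems; an empty list means the basics look fine."""
    problems = []
    if sys.version_info < (3, 11):
        problems.append(f"Python 3.11+ required, found {sys.version.split()[0]}")
    node = shutil.which("node")
    if node is None:
        problems.append("Node.js not found on PATH")
    else:
        out = subprocess.run([node, "--version"], capture_output=True, text=True, check=False).stdout
        try:
            if parse_version(out) < (18,):
                problems.append(f"Node.js 18+ required, found {out.strip()}")
        except ValueError:
            problems.append("could not read the Node.js version")
    if shutil.which("uv") is None:
        problems.append("uv not found on PATH")
    if not env_path.exists():
        problems.append(f"{env_path} not found")
    else:
        problems += [f"{key} is missing or empty in {env_path}"
                     for key in missing_keys(parse_env(env_path.read_text()))]
    return problems


if __name__ == "__main__":
    for problem in check_environment():
        print("-", problem)
```

Tuple comparison does the version check: `(18, 19, 0) < (18,)` is false, while `(16, 20, 2) < (18,)` is true, so no extra version library is needed.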

How MiroFish works

At its core, MiroFish is a next-gen prediction engine built on multi-agent simulation.

Instead of feeding numbers into a statistical model and receiving a probability in return, MiroFish builds a miniature society and watches what happens.

The process begins with something called "seed material." This can be almost anything: social media sentiment summaries, breaking news articles, policy docs, financial reports, research theses, or even works of fiction.

All you have to do is upload the seed material, describe your prediction question in plain language (for example, "How might public sentiment evolve over the next three months?" or "What happens in the second half of this story?"), and set MiroFish in motion.

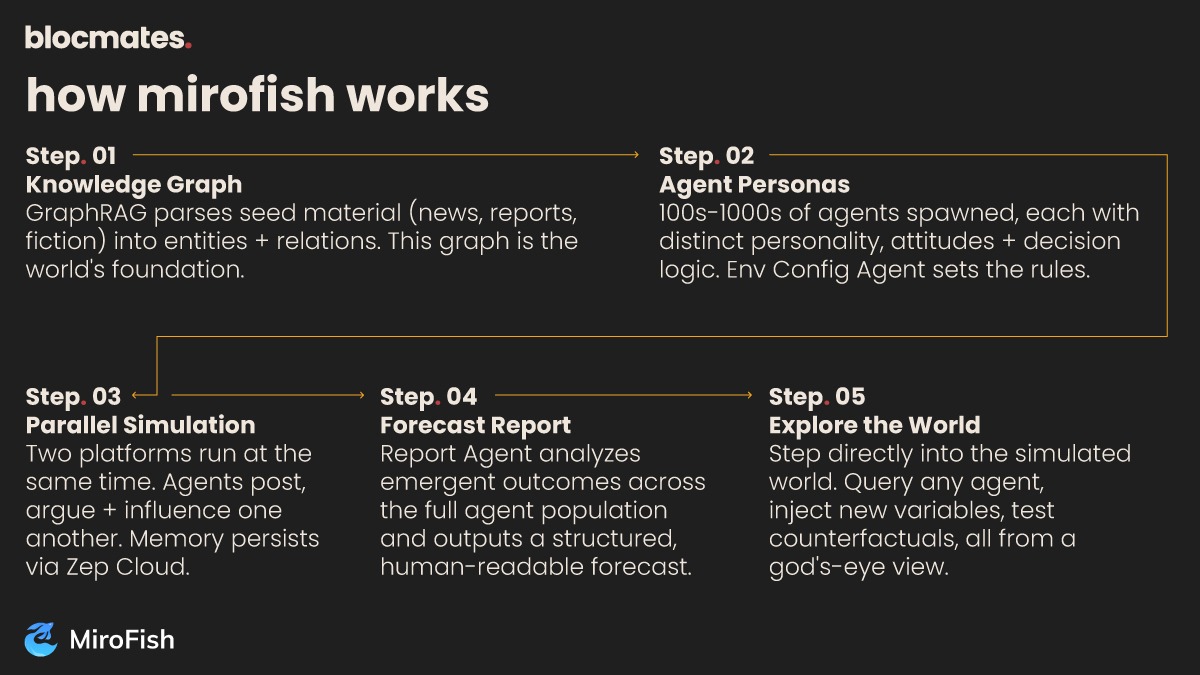

From there, MiroFish works through five stages.

- The system uses GraphRAG to dive into the seed material and construct a dynamic knowledge graph. It extracts entities such as people, institutions, and events, then maps the relationships between them.

This graph becomes the foundation of the simulated world.

- MiroFish generates hundreds or thousands of agent personas from that graph, each with its own personality, background, attitudes, and decision logic.

An Environment Configuration Agent then sets the rules of engagement for the world these agents will inhabit.

- The simulation runs on two simulated social platforms in parallel. Agents post, argue, form opinions, change their minds, and influence one another in real time.

Their memories update as the simulation progresses, with Zep Cloud providing persistent long-term storage. This means agents can recall earlier rounds and adjust their behavior accordingly.

- Once the simulation concludes, a dedicated Report Agent analyzes the emergent outcomes and produces a structured, human-readable forecast that synthesizes what the agent population collectively arrived at.

- This is the step where MiroFish distinguishes itself from a typical report generator. You can step into the simulated world directly.

You can interview individual agents, question the Report Agent for deeper analysis, and inject new variables mid-run to test counterfactual scenarios.

The simulation is not a black box; it is an interactive environment navigable from a "god's-eye view."
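To make the hand-offs between these stages concrete, here is a deliberately tiny, stdlib-only toy. It is not MiroFish's code, and every name in it is hypothetical: a hand-written list of triples stands in for GraphRAG extraction, numeric opinions stand in for LLM-driven personas, a plain Python list stands in for Zep memory, and a simple averaging rule (a basic opinion-dynamics model, swapped in for illustration) stands in for OASIS.

```python
import random
from dataclasses import dataclass, field
from statistics import mean

Triple = tuple[str, str, str]


def build_graph(triples: list[Triple]) -> dict[str, set[str]]:
    """Stage 1 stand-in: an undirected entity graph from (source, relation, target) triples."""
    graph: dict[str, set[str]] = {}
    for src, _relation, dst in triples:
        graph.setdefault(src, set()).add(dst)
        graph.setdefault(dst, set()).add(src)
    return graph


@dataclass
class Agent:
    name: str
    opinion: float       # -1.0 (hostile) to +1.0 (supportive)
    stubbornness: float  # share of its previous opinion the agent keeps each round
    memory: list[float] = field(default_factory=list)  # neighbor averages heard so far


def make_agents(graph: dict[str, set[str]], rng: random.Random) -> dict[str, Agent]:
    """Stage 2 stand-in: one persona per entity, with randomized starting traits."""
    return {name: Agent(name, rng.uniform(-1.0, 1.0), rng.uniform(0.2, 0.9))
            for name in sorted(graph)}


def simulate(graph, agents, rounds, shocks=None, memory_window=3):
    """Stage 3 stand-in: synchronous rounds in which agents listen to their neighbors.

    `shocks` maps a round number to {agent name: opinion change}, a crude version of
    injecting a new variable mid-run (stage 5). Returns one opinion snapshot per round,
    starting with the initial state.
    """
    shocks = shocks or {}
    history = [{n: a.opinion for n, a in agents.items()}]
    for r in range(rounds):
        for name, delta in shocks.get(r, {}).items():
            agent = agents[name]
            agent.opinion = max(-1.0, min(1.0, agent.opinion + delta))
        snapshot = {n: a.opinion for n, a in agents.items()}
        for name, agent in agents.items():
            agent.memory.append(mean(snapshot[n] for n in sorted(graph[name])))
            # React to the last few remembered rounds, not only the latest one.
            recalled = mean(agent.memory[-memory_window:])
            agent.opinion = agent.stubbornness * agent.opinion + (1 - agent.stubbornness) * recalled
        history.append({n: a.opinion for n, a in agents.items()})
    return history


def report(history, threshold=0.1):
    """Stage 4 stand-in: summarize where the population started and where it ended up."""
    first, last = history[0], history[-1]
    return {
        "rounds": len(history) - 1,
        "start_mean": round(mean(first.values()), 3),
        "final_mean": round(mean(last.values()), 3),
        "supportive": sum(v > threshold for v in last.values()),
        "hostile": sum(v < -threshold for v in last.values()),
    }


if __name__ == "__main__":
    triples = [  # hypothetical "seed material", already reduced to facts
        ("Regulator", "investigates", "Startup"),
        ("Journalist", "reports on", "Startup"),
        ("Investor", "funds", "Startup"),
        ("Journalist", "interviews", "Regulator"),
        ("Customer", "reads", "Journalist"),
    ]
    graph = build_graph(triples)
    baseline = simulate(graph, make_agents(graph, random.Random(7)), rounds=10)
    shocked = simulate(graph, make_agents(graph, random.Random(7)), rounds=10,
                       shocks={5: {"Journalist": -0.8}})
    print("baseline:", report(baseline))
    print("negative story at round 5:", report(shocked))
```

Seeding both runs identically means any difference between the two reports comes from the mid-run shock alone, which is the essence of a counterfactual comparison. As a toy, it says nothing about predictive accuracy.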

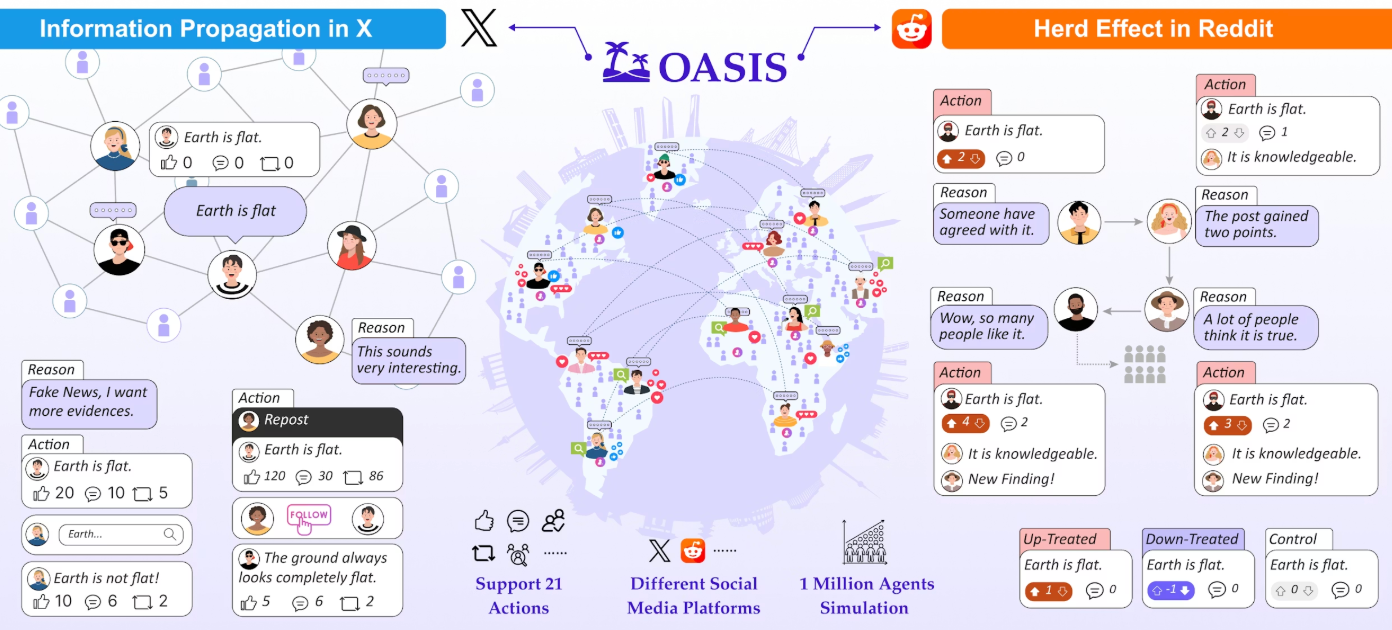

The system runs on any OpenAI-compatible LLM backend and is powered by OASIS (Open Agent Social Interaction Simulations), an open-source framework from the CAMEL-AI research community.

The framework is built to simulate the behavior of up to one million users on Twitter- and Reddit-like platforms.

Demo exhibits

MiroFish's two published demo cases illustrate the range of problems these agents can tackle.

The first revolves around a study in public opinion dynamics at Wuhan University. MiroFish was fed a structured opinion report generated by BettaFish and tasked with simulating how sentiment would shift over the next few weeks following a campus controversy.

The simulation produced a time-sequenced trajectory of opinion change, showing not just where sentiment ended up but how different factions formed, shifted, and influenced one another along the way.

In another demo, the team fed MiroFish the first 80 chapters of Dream of the Red Chamber, a classic 18th-century Chinese novel whose original ending was lost (the final 40 chapters in circulation are widely regarded as a later completion), and asked the system to simulate how the story might have concluded.

Thousands of agents, each representing a different character with personality, relationships, and memories drawn from the text, played out the remaining arc.

The ending wasn’t speculative prose written by a single language model. Instead, it emerged from the collective social dynamics of a simulated cast interacting over fictional time.

Still plenty of fish to fry

MiroFish is genuinely exciting as a product, but let’s not pretend that it's more mature than it is.

It’s still at square one, trying to find its footing.

The most significant challenge, imo, is validation. A simulation can produce very convincing output without being predictively accurate.

If you ask me, the richer and more elaborate the output, the easier it is to skip checking whether it's actually valid.

The OASIS framework brings academic credibility, but at the end of the day, LLM-based agent behavior does not necessarily mirror how actual humans act. Small deviations in initial conditions could end up producing wildly different outcomes.
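The sensitivity point is easy to demonstrate with a classic model that has nothing to do with MiroFish: Granovetter's threshold model of collective behavior, in which each person joins a crowd once enough others already have. In the textbook example, 100 people with thresholds 0 through 99 produce a full cascade, but raising a single threshold from 1 to 2 stops it after one person. The sketch below reproduces that and adds an ensemble of randomly perturbed runs, one cheap way to see how much an outcome depends on starting conditions before trusting a single simulation. The function names are hypothetical.

```python
import random


def cascade_size(thresholds: list[int]) -> int:
    """Granovetter-style threshold model: person i joins once at least
    thresholds[i] others have joined. Returns the final crowd size."""
    joined = 0
    while True:
        now = sum(1 for t in thresholds if t <= joined)
        if now == joined:  # nobody new crosses their threshold: equilibrium
            return joined
        joined = now


def ensemble(base: list[int], runs: int, jitter: int, seed: int = 0) -> list[int]:
    """Re-run the model with every threshold nudged by up to +/- jitter."""
    rng = random.Random(seed)
    sizes = []
    for _ in range(runs):
        perturbed = [max(0, t + rng.randint(-jitter, jitter)) for t in base]
        sizes.append(cascade_size(perturbed))
    return sizes


if __name__ == "__main__":
    uniform = list(range(100))             # thresholds 0, 1, 2, ..., 99
    nudged = [0, 2] + list(range(2, 100))  # one person's threshold raised from 1 to 2
    print(cascade_size(uniform))  # 100: everyone joins
    print(cascade_size(nudged))   # 1: the cascade stalls immediately
    sizes = ensemble(uniform, runs=20, jitter=1)
    print("ensemble range:", min(sizes), "to", max(sizes))
```

A wide spread across perturbed runs is a warning sign that a single simulated forecast may be reporting noise rather than signal.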

Then there are also practical constraints. The system is currently optimized for macOS; Windows compatibility is still being tested.

And of course, you have the cost factor. Running meaningful simulations of 800 to 1,200 agents over 30 to 50 rounds costs real money in API tokens, which isn’t always feasible.
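A back-of-envelope estimate shows how the bill scales. If every agent acts once per round (an assumption), the quoted range spans 800 × 30 = 24,000 to 1,200 × 50 = 60,000 LLM calls. The tokens per call, the price per million tokens, and the `estimate_run` helper below are all hypothetical placeholders; substitute your own model's numbers.

```python
def estimate_run(agents: int, rounds: int, tokens_per_action: int,
                 usd_per_million_tokens: float, activation_rate: float = 1.0) -> dict[str, float]:
    """Rough token bill for one simulation.

    Assumes each active agent makes one LLM call per round; activation_rate is the
    fraction of agents acting in a given round. Ignores graph building and reporting.
    """
    actions = agents * rounds * activation_rate
    tokens = actions * tokens_per_action
    return {"actions": actions, "tokens": tokens,
            "usd": tokens / 1_000_000 * usd_per_million_tokens}


if __name__ == "__main__":
    # Hypothetical inputs: 2,000 tokens per call (persona + memory + feed + reply)
    # at a blended $0.50 per million tokens.
    for agents, rounds in [(800, 30), (1200, 50)]:
        est = estimate_run(agents, rounds, tokens_per_action=2_000, usd_per_million_tokens=0.50)
        print(f"{agents} agents x {rounds} rounds: {est['actions']:,.0f} calls, "
              f"{est['tokens'] / 1e6:,.0f}M tokens, ~${est['usd']:,.2f}")
```

Under these made-up inputs the two ends come to roughly $24 and $60 per run. Because the estimate is linear in every factor, a model priced 20x higher, or prompts that grow as agent memory accumulates, scale the bill by the same factor, and repeated runs for validation multiply it again.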

That said, some of these issues are already being addressed as they come up.

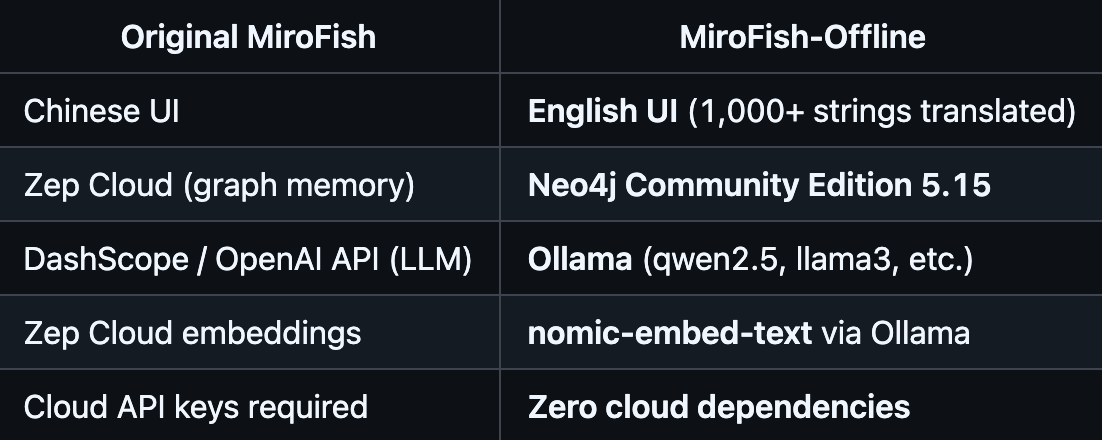

You might assume that because MiroFish depends on a working LLM endpoint and Zep Cloud, running it fully offline is out of reach for now.

But that isn’t really the case. The MiroFish-Offline fork replaces the cloud dependencies with a fully local stack (using Ollama to serve the LLM locally and Neo4j Community Edition for the knowledge graph), enabling the entire system to run without any external API calls.

As for use cases, the possibilities are endless. Spitballing a few off the top of my head:

- Trading signal generation

- Market sentiment analysis

- Prediction market outputs

- Options volatility scenario modeling

- Policy impact analysis

- Election outcomes

- Product launches

- Infra stress testing

- Corporate scenario planning

- Sanction simulations

- Legal case outcome predictions

- Competitor response simulations

In each case, the core need is the same: understanding how a complex population of actors with different motivations will respond to a change in conditions.

You name it, and MiroFish can help you by building a living world model and letting you stress-test it before reality does.

Bottom line

MiroFish's existence says a lot about the current state of AI tooling.

A single undergrad, working alone with a laptop and AI coding assistants, built a functional multi-agent simulation platform in the blink of an eye, and it outpaced open-source projects from major tech firms on the world's largest developer platform.

Knowledge grounding, persistent agent memory, parallel simulation, and interactive post-simulation exploration are game-changing features.

MiroFish offers a glimpse of how quickly the barriers to building complex systems are eroding.

A single dev can now spin up tools, platforms, and apps that once took full research teams.

From here, the bottleneck shifts. It’s less about what's buildable and more about who has the ideas.

As always, may the best ideas keep winning.
